2025-03-05 · 3 min

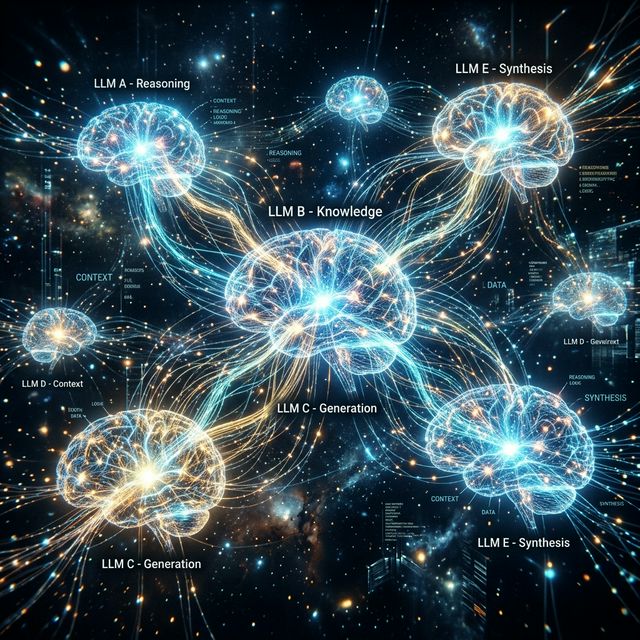

Multi-LLM: Why Your AI Needs a Backup Plan

The Single Provider Problem

If your AI agent depends on a single LLM provider and that provider goes down, your entire customer service operation stops. For businesses handling thousands of daily conversations, even 30 minutes of downtime means lost sales and frustrated customers.

How Multi-LLM Routing Works

LINK automatically routes requests across multiple LLM providers:

- Groq — Ultra-fast inference for real-time conversations

- Google Gemini — Strong multilingual and reasoning capabilities

- SambaNova — High-throughput processing for peak loads

- Cerebras — Lightning-fast response times

When one provider experiences issues, LINK redirects traffic to the remaining providers. The conversation continues on another provider, so customers should not notice the switch.
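The failover behavior described above can be sketched in a few lines. This is a minimal illustration, not LINK's actual implementation: `ProviderError`, `complete_with_failover`, and the two stub providers are hypothetical names, and a real router would wrap each vendor's SDK call and likely add timeouts and retries.

```python
from typing import Callable, List, Sequence, Tuple


class ProviderError(Exception):
    """Hypothetical error raised when a provider call fails (outage, timeout, rate limit)."""


# A provider is a (name, call) pair; `call` takes a prompt and returns a reply.
Provider = Tuple[str, Callable[[str], str]]


def complete_with_failover(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in order and return (provider_name, reply) from the first success."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Record the failure and fall through to the next provider.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stub providers standing in for real SDK calls (assumptions for the example).
def down_provider(prompt: str) -> str:
    raise ProviderError("service unavailable")


def healthy_provider(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    chain = [("primary", down_provider), ("backup", healthy_provider)]
    print(complete_with_failover("Where is my order?", chain))
```

The caller only sees the reply and the name of the provider that served it. The failed attempt on the first provider is handled inside the router.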

Intelligent Routing

- Latency-based: Routes to the fastest-responding provider

- Cost-optimized: Balances performance with cost efficiency

- Context-aware: Maintains conversation context across provider switches
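One way to picture the three strategies together is a small scoring function plus a router-side conversation history. The sketch below is an assumption about how such routing could work, not a description of LINK's internals: `ProviderStats`, `pick_provider`, `route_turn`, the `cost_weight` parameter, and all latency and cost figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Message = Dict[str, str]


@dataclass
class ProviderStats:
    name: str
    avg_latency_ms: float      # rolling average of recent response times
    cost_per_1k_tokens: float  # illustrative number, not real pricing


def pick_provider(stats: List[ProviderStats], cost_weight: float = 0.0) -> str:
    """Return the provider with the lowest blended score.

    cost_weight=0.0 is purely latency-based; values closer to 1.0
    favor cheaper providers at the expense of speed.
    """
    max_latency = max(s.avg_latency_ms for s in stats) or 1.0
    max_cost = max(s.cost_per_1k_tokens for s in stats) or 1.0

    def score(s: ProviderStats) -> float:
        latency_part = s.avg_latency_ms / max_latency
        cost_part = s.cost_per_1k_tokens / max_cost
        return (1.0 - cost_weight) * latency_part + cost_weight * cost_part

    return min(stats, key=score).name


def route_turn(
    history: List[Message],
    user_message: str,
    stats: List[ProviderStats],
    clients: Dict[str, Callable[[List[Message]], str]],
    cost_weight: float = 0.0,
) -> str:
    """Handle one conversation turn and return the name of the provider used.

    The history lives in the router, and the full history is sent on every
    call, so a different provider can pick up the conversation mid-stream.
    """
    history.append({"role": "user", "content": user_message})
    name = pick_provider(stats, cost_weight)
    reply = clients[name](history)
    history.append({"role": "assistant", "content": reply})
    return name


# Hypothetical stats and stub clients for the example.
STATS = [
    ProviderStats("provider_a", avg_latency_ms=100.0, cost_per_1k_tokens=0.5),
    ProviderStats("provider_b", avg_latency_ms=250.0, cost_per_1k_tokens=0.1),
]
CLIENTS = {
    "provider_a": lambda messages: f"provider_a saw {len(messages)} messages",
    "provider_b": lambda messages: f"provider_b saw {len(messages)} messages",
}

if __name__ == "__main__":
    history: List[Message] = []
    print(route_turn(history, "Hello", STATS, CLIENTS))                       # latency-based
    print(route_turn(history, "And pricing?", STATS, CLIENTS, cost_weight=0.8))  # cost-weighted
    print(history[-1]["content"])
```

Keeping the history outside any single provider is the design choice that makes a switch safe: the second provider receives the same messages the first one would have.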

The Bottom Line

Multi-LLM architecture isn't a luxury — it's a requirement for any business that takes AI-powered customer service seriously.